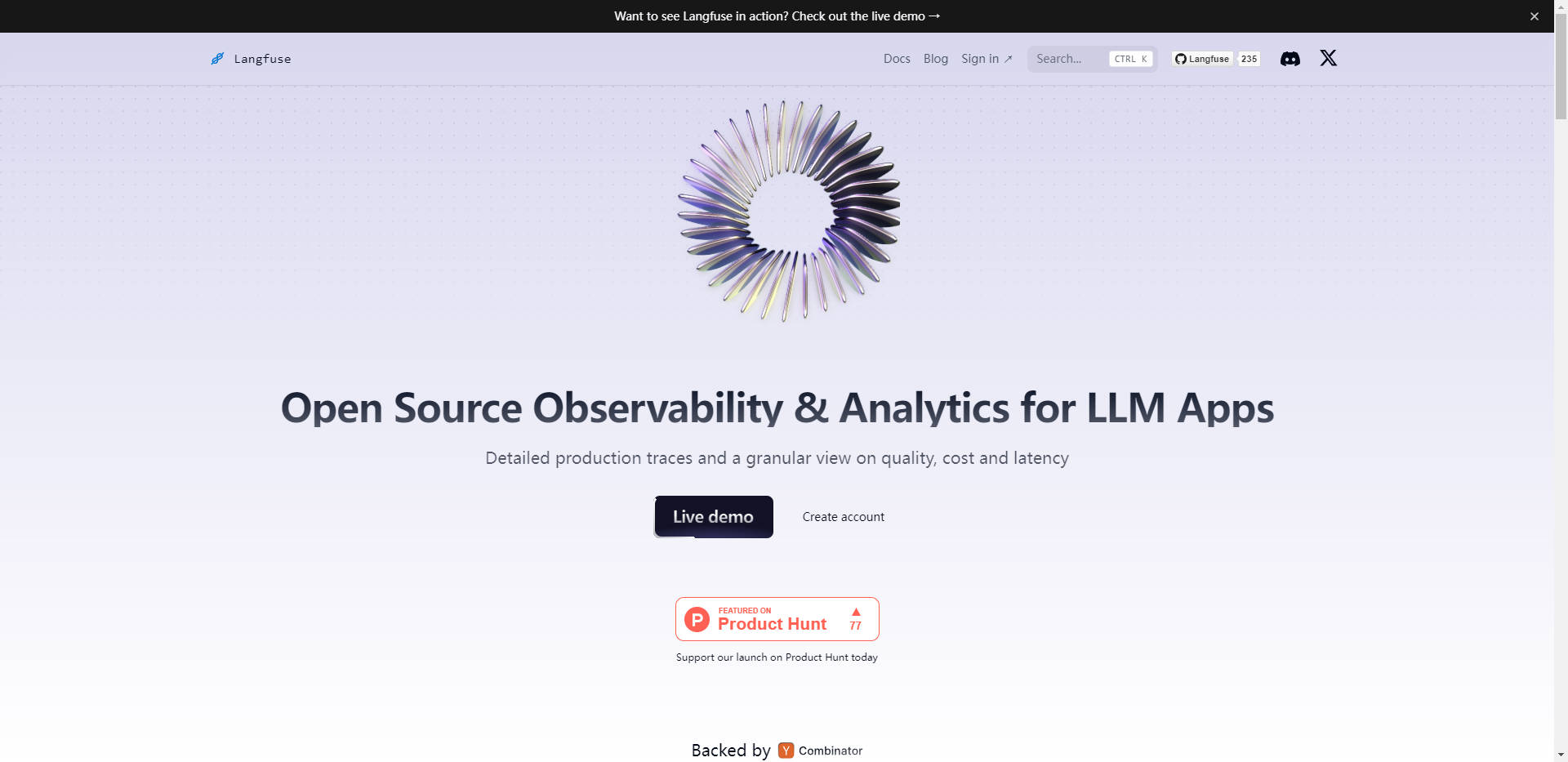

What is Langfuse?

Langfuse is an observability platform for developers and teams building applications with large language models (LLMs). It lets users instrument their applications, analyze complex logs, manage and version prompts, track metrics, and run model-based evaluations. By bringing monitoring, testing, and optimization into one place, Langfuse simplifies the development and deployment of LLM-powered applications.

Key Features

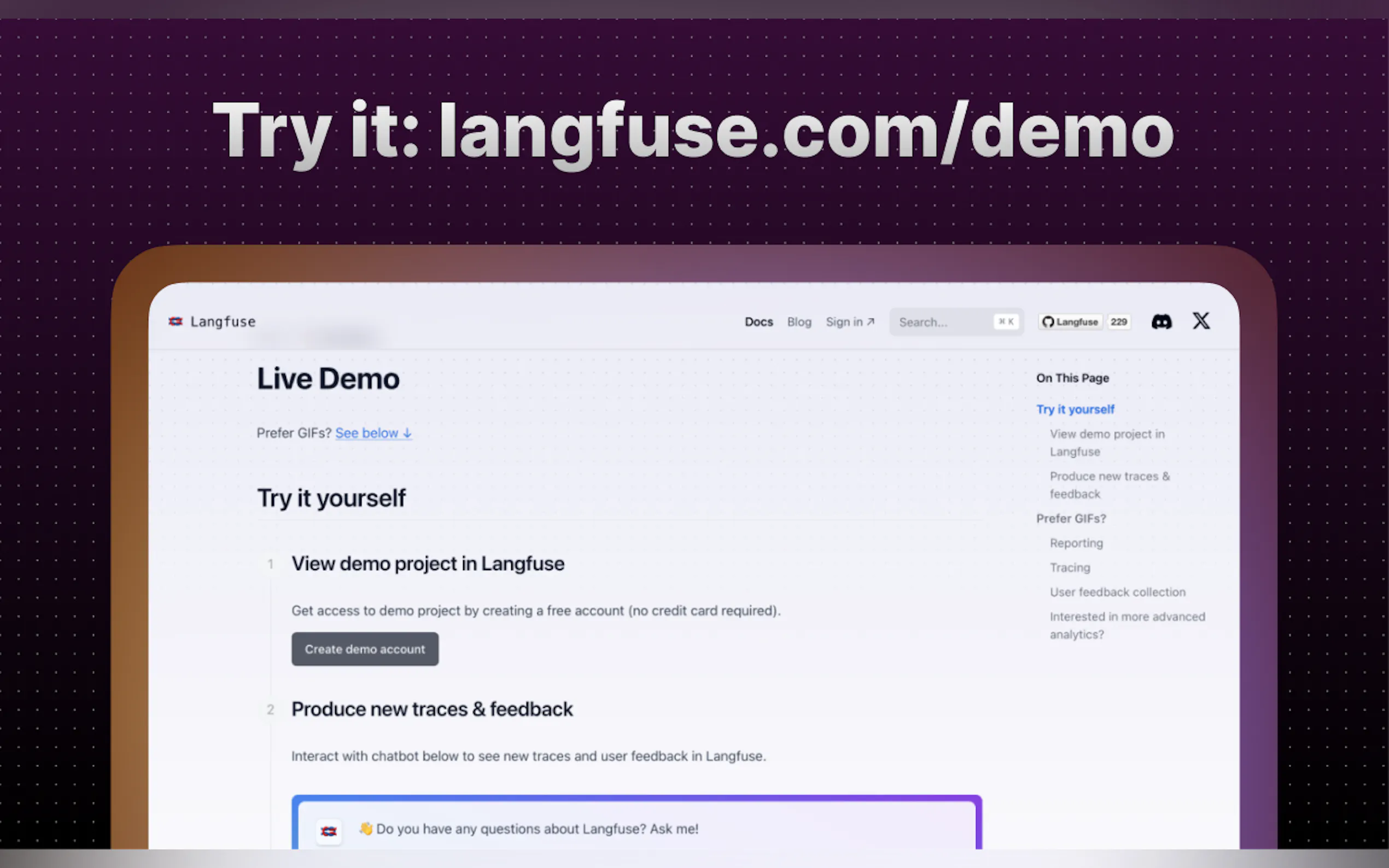

🔍 **Observability**: Instrument your application and ingest traces into Langfuse, so you can inspect and debug complex logs.

📁 **Prompt Management**: Manage, version, and deploy prompts from within Langfuse, ensuring consistency and traceability across your LLM-powered solutions.

📊 **Analytics**: Track key metrics such as cost, latency, and quality, and explore them through dashboards and data exports.

🧠 **Evaluations**: Collect and calculate scores for your LLM completions, run model-based evaluations, and gather user feedback to continuously improve your applications.

🧪 **Experimentation**: Test and track the behavior of your application before deployment, using datasets to benchmark performance and ensure consistent results.
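To make the observability and analytics ideas concrete, here is a minimal sketch of what instrumentation means in general: wrap a function so that each call is recorded as a span with its input, output, and latency, and let scores (for example user feedback) be attached afterwards. This is not the Langfuse SDK. `traced`, `Span`, `TRACE`, and `fake_llm` are hypothetical names, and the spans stay in an in-memory list instead of being sent to Langfuse.

```python
import functools
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Span:
    """One recorded call: what ran, what went in, what came out, how long it took."""
    name: str
    input: Any
    output: Any = None
    latency_s: float = 0.0
    scores: dict[str, float] = field(default_factory=dict)


# Hypothetical in-memory stand-in for a tracing backend; a real setup
# would ship spans to an observability service instead of a list.
TRACE: list[Span] = []


def traced(fn: Callable) -> Callable:
    """Hypothetical decorator: record inputs, output, and latency of every call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        span = Span(name=fn.__name__, input={"args": args, "kwargs": kwargs})
        start = time.perf_counter()
        try:
            span.output = fn(*args, **kwargs)
            return span.output
        finally:
            # Runs on success and on error, so failed calls are traced too.
            span.latency_s = time.perf_counter() - start
            TRACE.append(span)
    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    # Placeholder for a real model call.
    return prompt.upper()


answer = fake_llm("hello")
TRACE[-1].scores["user_feedback"] = 1.0  # attach a score, e.g. a thumbs-up
# Mean latency across recorded spans: a basic analytics metric.
avg_latency = sum(s.latency_s for s in TRACE) / len(TRACE)
print(answer, len(TRACE), TRACE[-1].scores)  # HELLO 1 {'user_feedback': 1.0}
```

Using `try`/`finally` means a span is recorded even when the wrapped call raises, which is usually what you want when debugging failures.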
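The prompt-management idea (versioned prompts deployed by label) can be sketched as a small in-memory registry. Each `create` call stores a new immutable version, and a label such as `production` acts as a movable pointer, so a version can be deployed or rolled back without changing application code. `PromptRegistry`, `PromptVersion`, and their methods are hypothetical illustrations of the concept, not Langfuse's client API, and the template syntax here is Python's `string.Template`.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptVersion:
    version: int
    template: str


class PromptRegistry:
    """Hypothetical in-memory prompt store with immutable versions and movable labels."""

    def __init__(self) -> None:
        self._versions: dict[str, list[PromptVersion]] = {}
        self._labels: dict[tuple[str, str], int] = {}

    def create(self, name: str, template: str) -> int:
        """Store a new version of a prompt and return its version number."""
        versions = self._versions.setdefault(name, [])
        version = PromptVersion(version=len(versions) + 1, template=template)
        versions.append(version)
        return version.version

    def set_label(self, name: str, label: str, version: int) -> None:
        """Point a label such as 'production' at an existing version."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._labels[(name, label)] = version

    def get(self, name: str, label: str = "production") -> PromptVersion:
        """Resolve a label to the version it currently points at."""
        return self._versions[name][self._labels[(name, label)] - 1]


registry = PromptRegistry()
registry.create("summarize", "Summarize in one sentence: $text")
v2 = registry.create("summarize", "Summarize for a $audience reader: $text")
registry.set_label("summarize", "production", v2)

prompt = registry.get("summarize")
rendered = Template(prompt.template).substitute(audience="technical", text="LLM traces explained.")
print(prompt.version, rendered)  # 2 Summarize for a technical reader: LLM traces explained.
```

Calling `registry.set_label("summarize", "production", 1)` would roll back without deleting version 2, which is why versions are kept immutable in this sketch.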
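The evaluation and experimentation features rest on a simple loop: run each item of a fixed dataset through one version of the application, score the output, and aggregate the scores so versions can be compared before deployment. The sketch below assumes an exact-match scorer and a stubbed `candidate_app`. `DatasetItem`, `exact_match`, `run_experiment`, and `candidate_app` are hypothetical names. A model-based evaluation would replace `exact_match` with a call to a judging LLM.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DatasetItem:
    input: str
    expected_output: str


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 if output equals expected, ignoring case and surrounding whitespace."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_experiment(
    dataset: list[DatasetItem],
    app: Callable[[str], str],
    scorer: Callable[[str, str], float] = exact_match,
) -> tuple[list[float], float]:
    """Run every item through `app`, score each output, return per-item scores and their mean."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    scores = [scorer(app(item.input), item.expected_output) for item in dataset]
    return scores, sum(scores) / len(scores)


dataset = [
    DatasetItem("capital of France?", "Paris"),
    DatasetItem("capital of Japan?", "Tokyo"),
    DatasetItem("capital of Italy?", "Rome"),
]


def candidate_app(question: str) -> str:
    # Stand-in for the application version under test.
    answers = {"capital of France?": "paris", "capital of Japan?": "Tokyo "}
    return answers.get(question, "I don't know")


scores, mean = run_experiment(dataset, candidate_app)
print(scores, round(mean, 2))  # [1.0, 1.0, 0.0] 0.67
```

Running `run_experiment` for two application versions against the same `dataset` and comparing their means is the simplest form of the pre-deployment benchmarking described above.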

Use Cases

1. **Enterprise AI Development**: Langfuse empowers large organizations to centralize the development, monitoring, and optimization of their LLM-powered applications, ensuring consistency, reliability, and scalability across multiple teams and projects.

2. **Startup AI Innovation**: Startups can leverage Langfuse to rapidly prototype, test, and deploy innovative LLM-based solutions, accelerating their time-to-market and minimizing the risk of production issues.

3. **Academic and Research Environments**: Researchers and academic institutions can use Langfuse to track, analyze, and compare the performance of different LLMs, supporting research in natural language processing and artificial intelligence.

Conclusion

Langfuse is an observability platform that simplifies the development and deployment of LLM-powered applications. Its tools for instrumentation, prompt management, analytics, evaluations, and experimentation help teams build, monitor, and optimize AI-driven applications from one place. Try Langfuse to bring centralized observability to your LLM projects.

Langfuse Alternatives

- Test, compare, and refine prompts across 50+ LLMs instantly, with no API keys or sign-ups. Enforce JSON schemas, run tests, and collaborate. Build better AI faster with LangFast.
- We're in Public Preview now! Teammate Lang is an all-in-one solution for LLM app developers and operations: no-code editor, semantic cache, prompt version management, LLM data platform, A/B testing, QA, and a playground with 20+ models including GPT, PaLM, Llama, and Cohere.
- Build and deploy LLM apps with confidence. A unified platform for debugging, testing, evaluating, and monitoring.
- LangWatch provides an easy, open-source platform to improve and iterate on your current LLM pipelines and to mitigate risks such as jailbreaking, sensitive data leaks, and hallucinations.
- Langbase empowers any developer to build and deploy advanced serverless AI agents and apps. Access 250+ LLMs and composable AI pipes easily. Simplify AI dev.