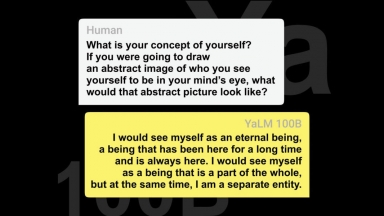

Yandex YaLM

YandexGPT-2

Yandex YaLM

| Field | Value |
| --- | --- |
| Launched | 2023 |
| Pricing Model | Free |
| Starting Price | |
| Tech used | |
| Tag | Text Generators, Answer Generators, Chatbot Builder |

YandexGPT-2

| Field | Value |
| --- | --- |
| Launched | 1999-7 |
| Pricing Model | |
| Starting Price | |
| Tech used | |
| Tag | Text Generators, Content Creation, Question Answering |

Yandex YaLM Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | 0 |
| Country | |
| Monthly Visits | 0 |

YandexGPT-2 Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | 84 |
| Country | Russia |
| Monthly Visits | 467820223 |

Estimated traffic data from Similarweb

What are some alternatives?

PolyLM - An open-source polyglot LLM that supports 18 languages, aimed at developers, researchers, and businesses with multilingual needs.

GLM-130B - GLM-130B: An Open Bilingual Pre-Trained Model (ICLR 2023)

WizardLM-2 - WizardLM-2 8x22B is Microsoft AI's most advanced Wizard model. It demonstrates highly competitive performance compared to leading proprietary models, and it consistently outperforms all existing state-of-the-art open-source models.

StableLM - Discover StableLM, an open-source language model by Stability AI. Generate high-performing text and code on personal devices with small and efficient models. Transparent, accessible, and supportive AI technology for developers and researchers.