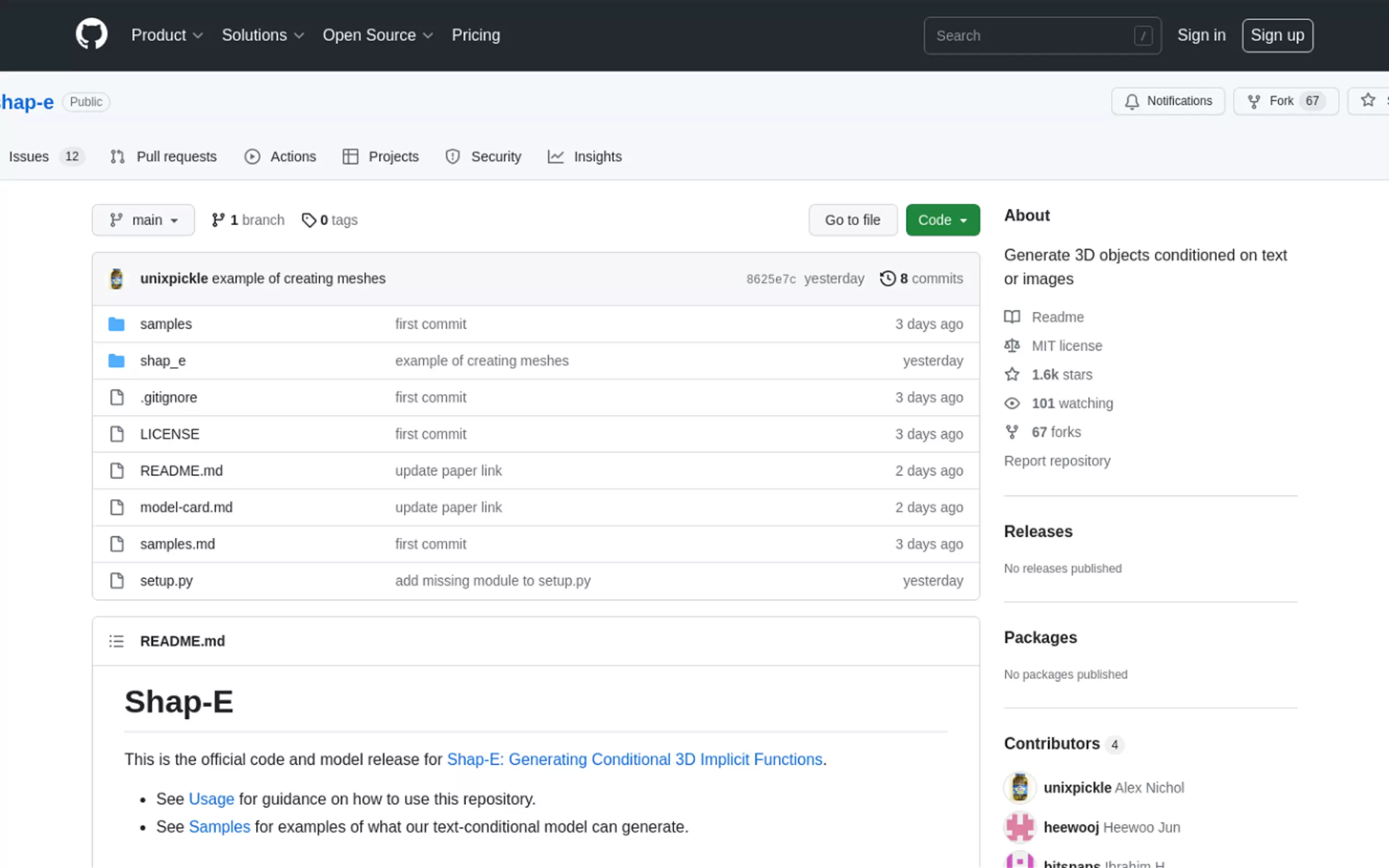

What is Shap-E?

Shap-E is an AI model from OpenAI that generates 3D objects from text or image prompts. Whether you're an artist seeking inspiration, a designer exploring new forms, or an engineer looking for novel solutions, Shap-E helps you bring your ideas to life in three dimensions.

Key Features:

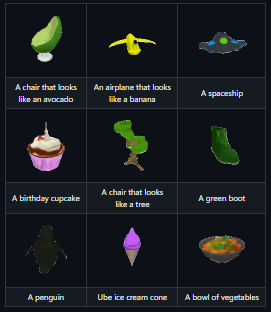

- Text-to-3D Creation: Convert textual descriptions into 3D models, transforming words into tangible objects.

- Image-to-3D Generation: Transform 2D images into 3D models, allowing you to add depth and volume to your creations.

- Latent Encoding: Encode 3D models or meshes into a latent representation, enabling further manipulation and editing.
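To make the text-to-3D feature concrete, here is a minimal sketch modeled on the sample notebook in the public Shap-E repository. It assumes the `shap-e` package and PyTorch are installed; the helper names `build_sampling_kwargs` and `text_to_ply`, the output file prefix, and the sampler values are illustrative choices rather than anything prescribed by this page.

```python
from typing import Any, Dict, List


def build_sampling_kwargs(
    prompt: str,
    batch_size: int = 1,
    guidance_scale: float = 15.0,
    use_fp16: bool = False,
) -> Dict[str, Any]:
    """Collect sampler settings for one text prompt (values are illustrative)."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return dict(
        batch_size=batch_size,
        guidance_scale=guidance_scale,
        # The text-conditional model expects one prompt per sample in the batch.
        model_kwargs=dict(texts=[prompt] * batch_size),
        progress=True,
        clip_denoised=True,
        use_fp16=use_fp16,
        use_karras=True,
        karras_steps=64,
        sigma_min=1e-3,
        sigma_max=160,
        s_churn=0,
    )


def text_to_ply(prompt: str, out_prefix: str = "mesh") -> List[str]:
    """Sample latents for a prompt and write each decoded mesh to a .ply file."""
    # Imported lazily so the helper above can be used without shap-e installed.
    import torch
    from shap_e.diffusion.gaussian_diffusion import diffusion_from_config
    from shap_e.diffusion.sample import sample_latents
    from shap_e.models.download import load_config, load_model
    from shap_e.util.notebooks import decode_latent_mesh

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    xm = load_model("transmitter", device=device)   # decodes latents into 3D
    model = load_model("text300M", device=device)   # text-conditional model
    diffusion = diffusion_from_config(load_config("diffusion"))

    kwargs = build_sampling_kwargs(prompt, use_fp16=(device.type == "cuda"))
    latents = sample_latents(model=model, diffusion=diffusion, **kwargs)

    paths = []
    for i, latent in enumerate(latents):
        path = f"{out_prefix}_{i}.ply"
        with open(path, "wb") as f:
            decode_latent_mesh(xm, latent).tri_mesh().write_ply(f)
        paths.append(path)
    return paths
```

Calling `text_to_ply("a shark")` would download the model weights on first use and write `mesh_0.ply`, which can be opened in a 3D viewer. The repository's image-to-3D notebook follows the same pattern with an image-conditional checkpoint in place of the text one.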

Use Cases:

- Design and Art: Generate unique 3D models for games, animations, architecture, and product design.

- Engineering and Manufacturing: Create and refine prototypes, visualize mechanical parts, or explore design options.

- Education and Research: Illustrate scientific concepts, design learning aids, or conduct shape-based experimentation.

Conclusion:

Shap-E's ability to translate text and images into 3D objects opens up new possibilities for creative expression, problem-solving, and research. With its user-friendly interface and accessible codebase, it is a valuable tool across a wide range of fields.

Shap-E Alternatives

- Create free 3D models from text or images with AI! Perfect for game developers, 3D printing, & animation. No sign-up, unlimited exports.

- Use text to 3D and image to 3D to generate 3D mesh objects. Take your ideas from concepts to real life through remixing and referencing any of your previous results.