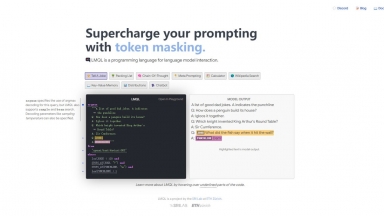

LMQL

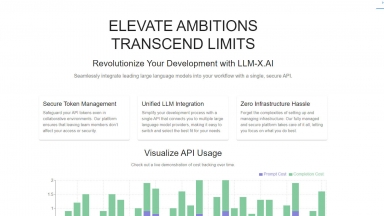

LLM-X

LMQL

| Field | Value |
| --- | --- |
| Launched | 2022-11 |
| Pricing Model | Free |
| Starting Price | |
| Tech used | Cloudflare Analytics, Fastly, Google Fonts, GitHub Pages, Highlight.js, jQuery, Varnish |
| Tag | Text Analysis |

LLM-X

| Field | Value |
| --- | --- |
| Launched | 2024-02 |
| Pricing Model | Free |
| Starting Price | |
| Tech used | Amazon AWS CloudFront, HTTP/3, Progressive Web App, Amazon AWS S3 |
| Tag | Inference APIs, Workflow Automation, Developer Tools |

LMQL Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | 2509184 |
| Country | United States |
| Monthly Visits | 8348 |

LLM-X Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | 18230286 |
| Country | |
| Monthly Visits | 218 |

Estimated traffic data from Similarweb

What are some alternatives?

LM Studio - LM Studio is an easy-to-use desktop app for experimenting with local and open-source Large Language Models (LLMs). The cross-platform app lets you download and run any ggml-compatible model from Hugging Face, and provides a simple yet powerful UI for model configuration and inference. The app uses your GPU when possible.

LLMLingua - To speed up LLM inference and improve the model's perception of key information, LLMLingua compresses the prompt and KV cache, achieving up to 20x compression with minimal performance loss.

LazyLLM - A low-code tool for building multi-agent LLM apps. Build, iterate on, and deploy complex AI solutions quickly, from prototype to production. Focus on algorithms, not engineering.

vLLM - A high-throughput and memory-efficient inference and serving engine for LLMs.