The Ultimate Guide to Installing and Leveraging Ollama on Windows

This guide walks you through installing Ollama on Windows and using it to run AI models locally, from the first download to calling its API from your own projects.

Table of Contents

Introduction to Ollama

Reasons to Choose Ollama for AI Development

Step-by-Step: Installing Ollama on Windows

Maximizing Ollama's Performance on Windows

Final Thoughts on Ollama

FAQs

Introduction to Ollama

Ollama is a tool for downloading and running large language models on your own machine. It gives developers, researchers, and AI enthusiasts a simple command-line interface and a local API, so working with these models takes far less setup.

Reasons to Choose Ollama for AI Development

Ollama stands out with its unique features that solve many challenges faced by AI developers:

Automatic Hardware Acceleration: Leveraging the best hardware resources, Ollama delivers optimized performance whether you're using an NVIDIA GPU or a CPU with AVX/AVX2.

No Virtualization Needed: Ollama runs as a native Windows application, so there is no need to set up WSL or a virtual machine before you start.

Comprehensive Model Library: Access an extensive collection of models, including vision models like LLaVA 1.6, without the hassle of independent sourcing and configuration.

Always-On API: Ollama's API runs quietly in the background, ready to elevate your projects with AI capabilities.
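Because the API runs in the background, a script can check whether it is reachable before doing any real work. Here is a minimal sketch using only the Python standard library. It assumes the default address mentioned later in this guide, and the helper name `is_ollama_running` is made up for illustration.

```python
import urllib.request

# Assumed default address; change it if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434"


def is_ollama_running(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers with status 200 at base_url."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # Covers refused connections, timeouts, and urllib's URLError/HTTPError.
        return False


if __name__ == "__main__":
    print("Ollama API reachable:", is_ollama_running())
```

A check like this only tells you that something is answering on that port. It does not confirm that a particular model is downloaded.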

Step-by-Step: Installing Ollama on Windows

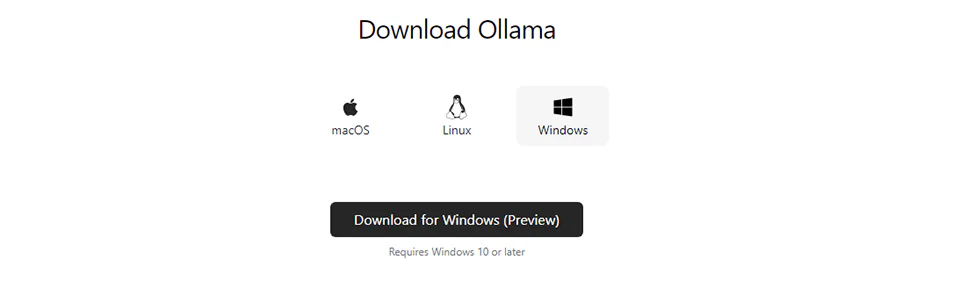

1. Download and Installation

Download: Navigate to the Ollama Windows Preview page and initiate the download of the executable installer.

Installation: Locate the .exe file in your Downloads folder, double-click to start the process, and follow the prompts to complete the installation.

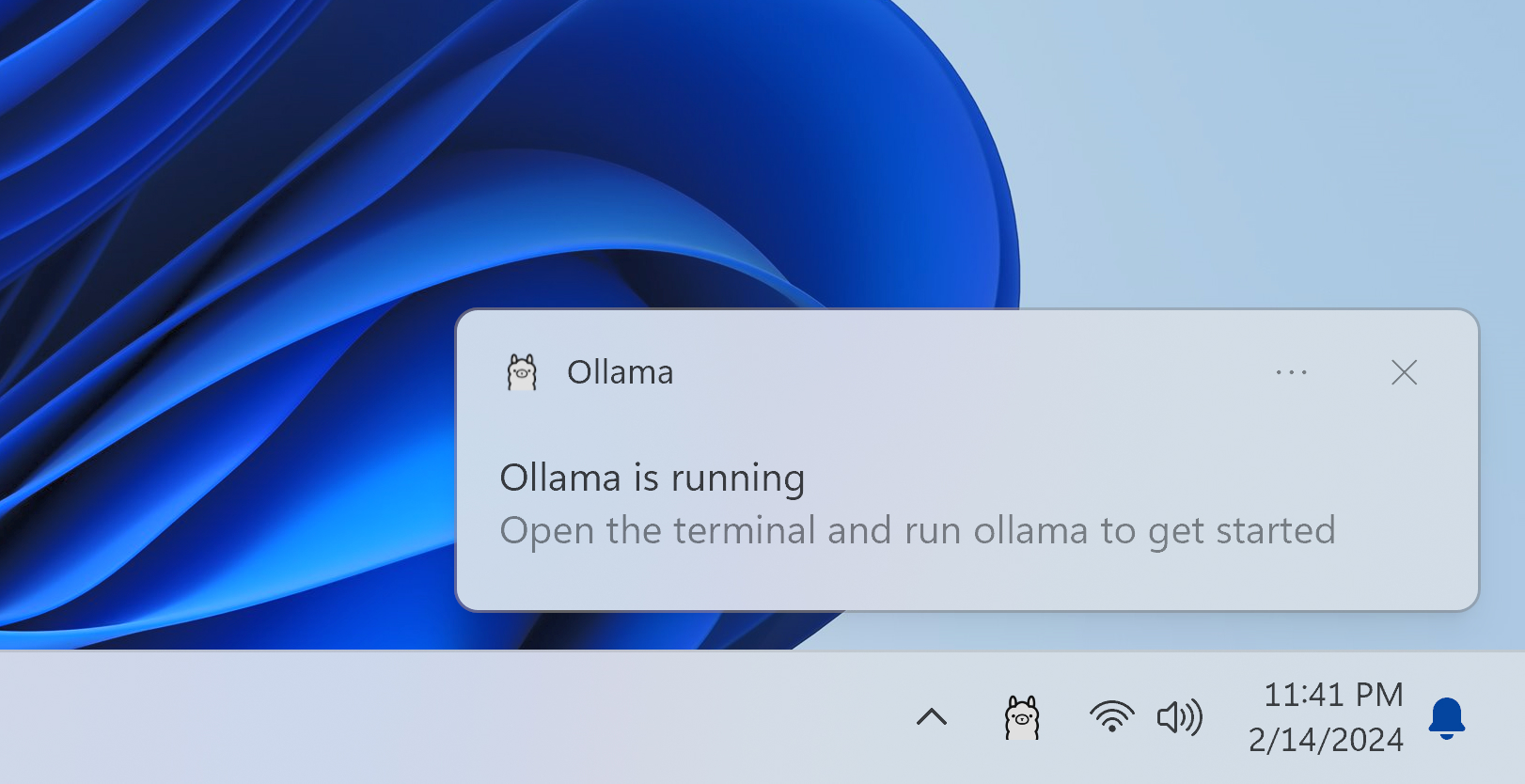

2. Running Ollama

Open Terminal: Use

Win + Sto search for Command Prompt or PowerShell, and launch it.Execute Ollama Command: Input

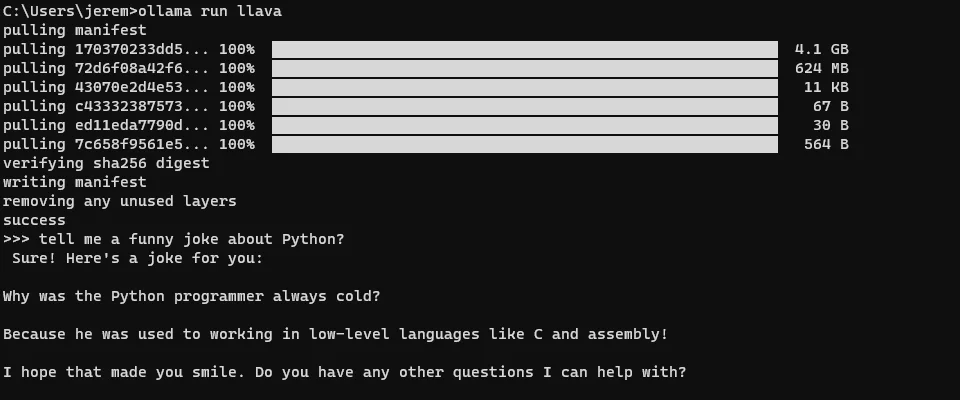

ollama run llama2to initialize the platform and prepare the model for interaction.
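If you would rather drive the command from a script than type it by hand, the same call can be made with Python's `subprocess` module. This is a rough sketch, not an official integration. It assumes `ollama` is on your PATH and that passing the prompt as an extra argument gives a one-shot answer instead of an interactive session. The helper names are hypothetical.

```python
import shutil
import subprocess


def build_run_command(model: str, prompt: str) -> list[str]:
    """Build the argument list for a one-shot `ollama run MODEL PROMPT` call."""
    return ["ollama", "run", model, prompt]


def run_once(model: str, prompt: str) -> str:
    """Run the model once and return whatever it printed to stdout."""
    if shutil.which("ollama") is None:
        raise RuntimeError("ollama was not found on PATH; is it installed?")
    result = subprocess.run(
        build_run_command(model, prompt),
        capture_output=True,
        text=True,
        encoding="utf-8",
        check=True,  # raise CalledProcessError if the command fails
    )
    return result.stdout.strip()


if __name__ == "__main__":
    # The first run may take a while because the model has to be downloaded.
    print(run_once("llama2", "Why is the sky blue?"))
```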

3. Utilizing Models

Interact directly with text-based models by typing prompts into the session. For image-based models such as LLaVA, start the model with `ollama run llava` and drag and drop an image into the terminal window to have it processed.
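Images can also be sent to a vision model programmatically. The sketch below assumes the local API accepts base64-encoded images in an `images` field on the `/api/generate` endpoint and returns the answer in a `response` field. The file name `photo.png` and the helper names are placeholders for illustration.

```python
import base64
import json
import urllib.request

# Assumed default endpoint for text generation.
GENERATE_URL = "http://localhost:11434/api/generate"


def build_image_payload(image_bytes: bytes, prompt: str, model: str = "llava") -> dict:
    """Build a request body with the image encoded as base64 text."""
    return {
        "model": model,
        "prompt": prompt,
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        "stream": False,  # ask for one JSON object instead of a stream
    }


def describe_image(path: str, prompt: str = "What is in this picture?") -> str:
    """Send an image file to the model and return its text answer."""
    with open(path, "rb") as f:
        payload = build_image_payload(f.read(), prompt)
    request = urllib.request.Request(
        GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    print(describe_image("photo.png"))  # hypothetical file name
```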

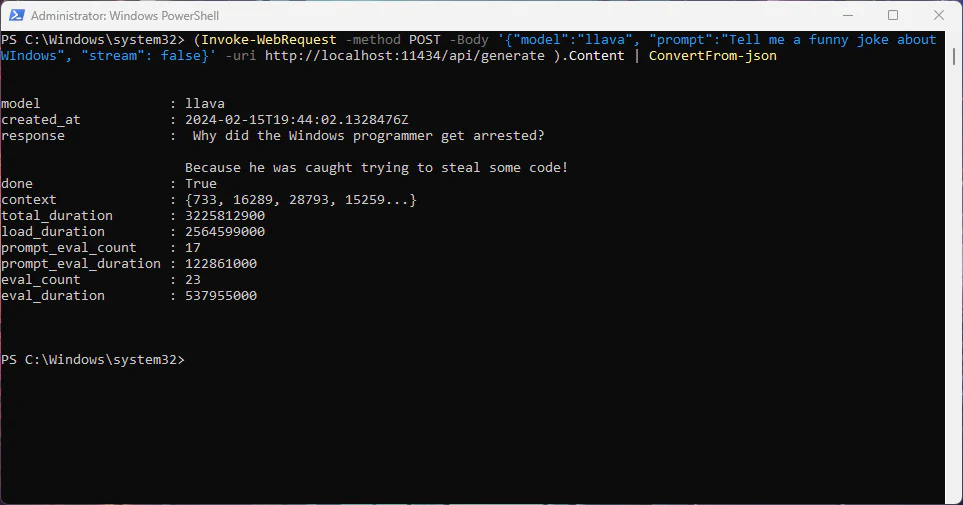

4. Connecting to Ollama API

Access API: Available by default at http://localhost:11434.

Sample API Call: Make an HTTP request, using curl for example, to send prompts to the model and receive responses.
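The same kind of request can be made from Python instead of curl. The sketch below assumes the `/api/generate` endpoint streams its answer as newline-delimited JSON objects, each carrying a `response` fragment and a `done` flag. The helper names are made up for this example, so treat it as a starting point rather than a reference client.

```python
import json
import urllib.request

# Assumed default endpoint for text generation.
GENERATE_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Create a POST request carrying the model name and prompt as JSON."""
    body = json.dumps({"model": model, "prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        GENERATE_URL,
        data=body,
        headers={"Content-Type": "application/json"},
    )


def join_stream(lines) -> str:
    """Concatenate the 'response' fragments from newline-delimited JSON chunks."""
    parts = []
    for line in lines:
        if not line.strip():
            continue  # skip blank lines between chunks
        chunk = json.loads(line)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break  # the final chunk marks the end of the answer
    return "".join(parts)


def generate(model: str, prompt: str) -> str:
    """Send the prompt to the local server and return the full answer."""
    with urllib.request.urlopen(build_request(model, prompt)) as resp:
        return join_stream(resp)


if __name__ == "__main__":
    print(generate("llama2", "Why is the sky blue?"))
```

Keeping the parsing in its own function makes it easy to try out on sample data without a running server.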

Maximizing Ollama's Performance on Windows

Here are tips to get the most out of Ollama:

Hardware Specifications: Align your system's hardware with Ollama's recommendations for optimal performance.

Update Drivers: Ensure your GPU drivers are current.

System Resources: Close unnecessary apps to allocate more resources to Ollama.

Right Model Selection: Balance model size with task complexity for efficiency.
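To make the model-selection tip a little more concrete, you can list the models already on your machine and filter them by size. This sketch assumes the `/api/tags` endpoint returns a `models` list whose entries have `name` and `size` (in bytes) fields. On-disk size is only a rough proxy for how much memory a model needs, and the 8 GB budget is an arbitrary example value.

```python
import json
import urllib.request

# Assumed default endpoint for listing locally installed models.
TAGS_URL = "http://localhost:11434/api/tags"


def models_within_budget(tags: dict, budget_bytes: int) -> list[str]:
    """Return names of models whose size fits the budget, largest first."""
    fitting = [m for m in tags.get("models", []) if m.get("size", 0) <= budget_bytes]
    fitting.sort(key=lambda m: m.get("size", 0), reverse=True)
    return [m["name"] for m in fitting]


def fetch_tags(url: str = TAGS_URL) -> dict:
    """Fetch the list of local models from the running server."""
    with urllib.request.urlopen(url) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    budget = 8 * 10**9  # example budget of roughly 8 GB
    print(models_within_budget(fetch_tags(), budget))
```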

Final Thoughts on Ollama

Ollama is reshaping the landscape of AI development with its ease of use, hardware optimization, and extensive model library. Delve into the platform, test different models, and discover new ways to revolutionize your projects.

FAQs

What makes Ollama unique compared to other AI platforms?

Ollama simplifies AI development with automatic hardware acceleration, a user-friendly setup that bypasses virtualization, and an extensive model library with an always-on API.

Can Ollama optimize performance on any Windows system?

While Ollama aims to optimize performance on a variety of systems, best results are achieved on systems that meet its recommended hardware specifications.

How do I access Ollama's model library?

After installation, use Ollama commands in the terminal to access and interact with the models directly.

What if I face installation issues or model loading errors?

Make sure Windows is up to date, confirm that you have the necessary permissions, and check for Ollama updates or patches.

Can Ollama significantly improve my AI project's efficiency?

Yes, Ollama's automatic hardware acceleration and comprehensive model library can greatly enhance the efficiency and capability of your AI projects.