DLRover

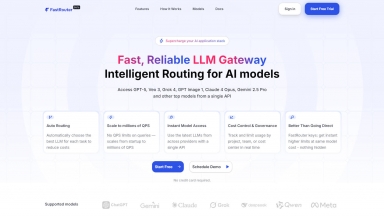

FastRouter.ai

DLRover

| Field | Value |
| --- | --- |
| Launched | |
| Pricing Model | Free |
| Starting Price | |
| Tech used | |
| Tag | Software Development, Data Science |

FastRouter.ai

| Field | Value |
| --- | --- |
| Launched | 2025-03 |
| Pricing Model | Free Trial |
| Starting Price | |
| Tech used | |
| Tag | |

DLRover Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | |
| Country | |
| Month Visit | |

FastRouter.ai Rank/Visit

| Metric | Value |
| --- | --- |
| Global Rank | 13018322 |
| Country | United States |
| Month Visit | 1224 |

Estimated traffic data from Similarweb

What are some alternatives?

LoRAX - LoRAX (LoRA eXchange) is a framework that allows users to serve thousands of fine-tuned models on a single GPU, dramatically reducing the cost of serving without compromising on throughput or latency.

Ludwig - Create custom AI models with ease using Ludwig. Scale, optimize, and experiment effortlessly with declarative configuration and expert-level control.

Activeloop - Activeloop-L0: Your AI Knowledge Agent for accurate, traceable insights from all multimodal enterprise data. Securely in your cloud, beyond RAG.

ktransformers - KTransformers, an open-source project by Tsinghua's KVCache.AI team and QuJing Tech, optimizes large language model inference. It lowers hardware requirements, running 671B-parameter models on a single GPU with 24 GB of VRAM, boosts inference speed (up to 286 tokens/s pre-processing, 14 tokens/s generation), and is suitable for personal, enterprise, and academic use.