VLLM

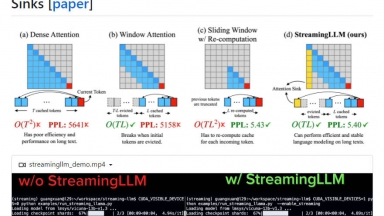

StreamingLLM

VLLM

| Launched | |
| Pricing Model | Free |
| Starting Price | |
| Tech used | |
| Tag | Software Development, Data Science |

StreamingLLM

| Launched | 2024 |
| Pricing Model | Free |
| Starting Price | |
| Tech used | |
| Tag | Workflow Automation, Developer Tools, Communication |

VLLM Rank/Visit

| Global Rank | |
| Country | |
| Month Visit | |

StreamingLLM Rank/Visit

| Global Rank | |
| Country | |
| Month Visit | |

Estimated traffic data from Similarweb

What are some alternatives?

EasyLLM - EasyLLM is an open source project that provides helpful tools and methods for working with large language models (LLMs), both open source and closed source. Get started immediately or check out the documentation.

LLMLingua - Compresses the prompt and KV cache to speed up LLM inference and improve the model's perception of key information, achieving up to 20x compression with minimal performance loss.

LazyLLM - LazyLLM: Low-code for multi-agent LLM apps. Build, iterate & deploy complex AI solutions fast, from prototype to production. Focus on algorithms, not engineering.

OneLLM - OneLLM is your end-to-end no-code platform to build and deploy LLMs.