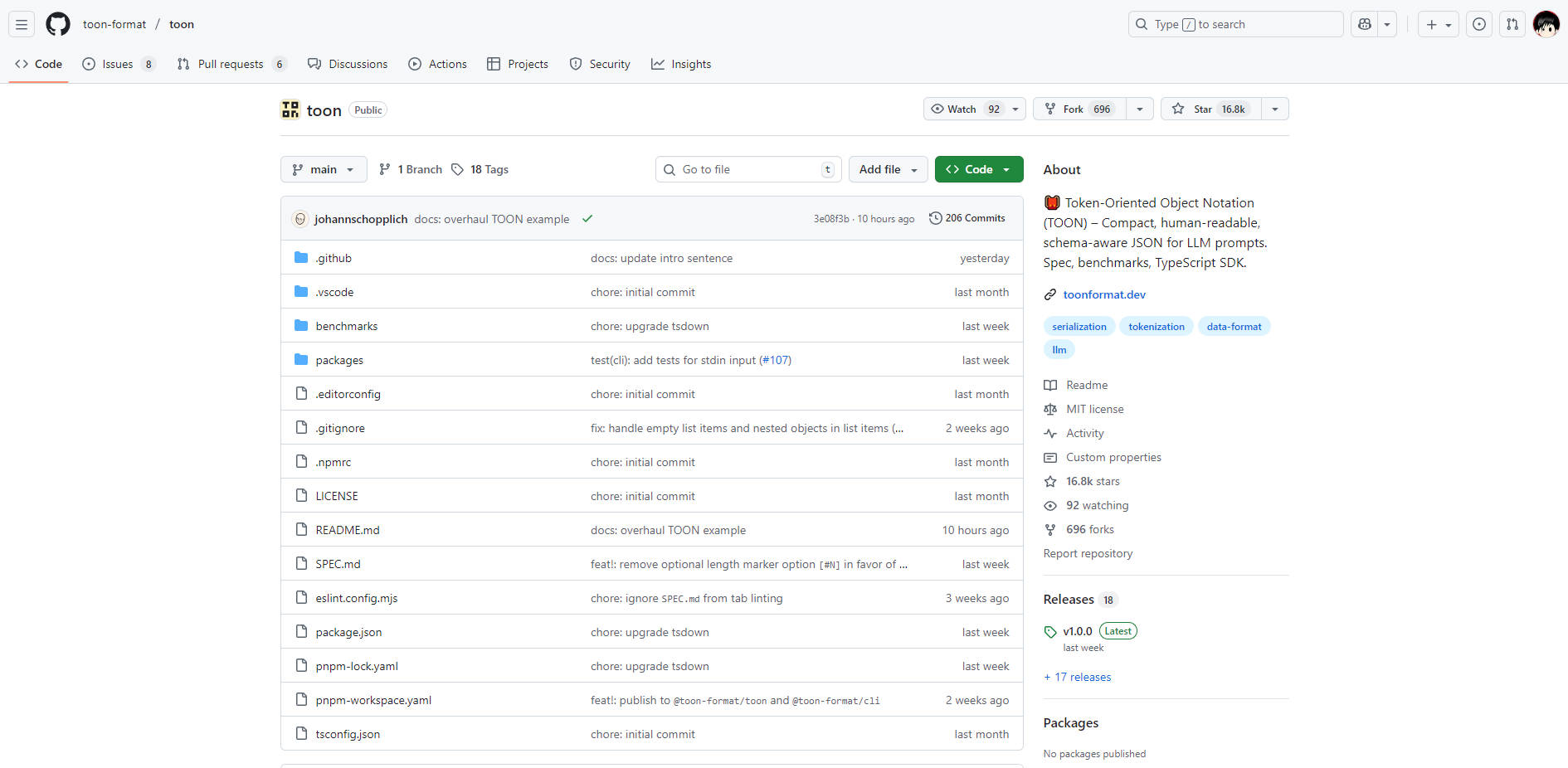

What is TOON?

TOON is a compact, schema-aware data serialization format designed to reduce the cost and improve the reliability of large structured inputs to Large Language Models (LLMs). It keeps complete fidelity to the standard JSON data model, so data converts in either direction without loss, but its human-readable syntax needs far fewer tokens. That makes it a practical translation layer for passing structured data into your LLM pipelines.

If you are working with large datasets or uniform arrays of objects that push the limits of your context window, TOON can lower API costs and help the model read your data reliably.

Key Features

TOON is engineered to provide the structural integrity of JSON with the token efficiency of lightweight formats, leveraging explicit guardrails that are highly effective for LLM consumption.

💸 Significant Token Reduction: Achieve typical token savings of 30–60% compared to formatted JSON, particularly when handling large, uniform arrays of objects. This reduction directly translates to lower operational costs and the ability to fit significantly more data within a fixed context window.

🤿 LLM-Friendly Guardrails and Validation: Unlike raw formats like CSV, TOON includes explicit structural metadata, such as array lengths (e.g., items[3]) and field headers ({sku,qty,price}). These explicit guardrails enable the model to reliably track structure, reducing parsing errors and improving the accuracy of data retrieval tasks.

🧺 Efficient Tabular Arrays: TOON's "sweet spot" is its tabular array format, which combines the structure of objects with the efficiency of CSV. By declaring keys only once in the header, you can stream subsequent data as simple, comma- or tab-separated rows. This minimal syntax removes the redundant punctuation (braces, brackets, and most quotes) that makes standard JSON token-expensive.

🔗 Optional Key Folding for Nested Data: Manage deeply nested objects efficiently using optional key folding. This feature collapses single-key wrapper chains into dotted paths (e.g., data.metadata.items) to further reduce indentation overhead and token count without sacrificing the original structure.
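As a rough illustration of key folding, the sketch below walks a chain of single-key objects and joins the keys into a dotted path. It is a hand-rolled example, not the TOON SDK: the name `foldSingleKeyChain` is hypothetical, and the rule of refusing to fold keys that already contain a dot is an assumption made here to keep paths unambiguous.

```typescript
// Illustrative only: a hand-rolled sketch of key folding, not the TOON SDK.
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };

function isPlainObject(value: Json): value is { [key: string]: Json } {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Follows a chain of single-key objects and joins the keys with dots.
// Stops at the first value that is not a single-key object.
// If nothing can be folded, `path` is "" and `value` is the input itself.
function foldSingleKeyChain(root: Json): { path: string; value: Json } {
  const segments: string[] = [];
  let current: Json = root;
  while (isPlainObject(current)) {
    const keys = Object.keys(current);
    // Assumption: keys that already contain a dot are left unfolded.
    if (keys.length !== 1 || keys[0].includes(".")) break;
    segments.push(keys[0]);
    current = current[keys[0]];
  }
  return { path: segments.join("."), value: current };
}

const folded = foldSingleKeyChain({ data: { metadata: { items: ["a", "b"] } } });
console.log(folded.path); // data.metadata.items
```

The folded path replaces three levels of indentation with a single key, while the value underneath (`["a", "b"]` here) is untouched, so the original nesting can be rebuilt by splitting the path on dots.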

Use Cases

TOON serves as a crucial optimization layer between your programmatic data structure (JSON) and your LLM interaction layer.

Cost-Efficient Data Analysis and Summarization: When feeding large volumes of structured logs, financial transactions, or user event data to an LLM for summarization or pattern recognition, encoding the input as TOON can substantially cut the cost of the prompt input. Uniform event logs are exactly the kind of large, repetitive array where the typical 30–60% savings over formatted JSON apply, allowing you to process more data per dollar.
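To make the event-log case concrete, here is a minimal sketch that writes a uniform array of flat objects in the tabular layout (length and field names in the header, one row per object) and compares its size with formatted JSON. The encoder is hand-rolled for illustration and is not the TOON SDK; `encodeTabular` and `formatCell` are hypothetical names, the quoting rules are a simplification, and character counts are only a rough proxy for tokens.

```typescript
// Illustrative only: a simplified tabular encoder, not the TOON SDK.
type Cell = string | number | boolean | null;
type Row = Record<string, Cell>;

// Simplified quoting (assumption): quote strings that are empty, padded with
// whitespace, contain a comma, quote, colon, backslash, or newline, or that
// would otherwise read back as a number, boolean, or null.
function formatCell(value: Cell): string {
  if (typeof value !== "string") return String(value);
  const ambiguous =
    value === "" ||
    value !== value.trim() ||
    /[,":\n\\]/.test(value) ||
    /^(true|false|null)$/.test(value) ||
    /^-?\d+(\.\d+)?$/.test(value);
  return ambiguous ? JSON.stringify(value) : value;
}

// Emits `name[N]{field,...}:` followed by one indented row per object.
// Assumes every row has exactly the same keys as the first row.
function encodeTabular(name: string, rows: Row[]): string {
  if (rows.length === 0) return `${name}[0]:`;
  const fields = Object.keys(rows[0]);
  const header = `${name}[${rows.length}]{${fields.join(",")}}:`;
  const lines = rows.map(
    (row) => "  " + fields.map((field) => formatCell(row[field])).join(","),
  );
  return [header, ...lines].join("\n");
}

const events: Row[] = [
  { id: 1, type: "login", ok: true },
  { id: 2, type: "purchase", ok: true },
  { id: 3, type: "logout", ok: false },
];

const toon = encodeTabular("events", events);
const json = JSON.stringify({ events }, null, 2);
console.log(toon);
console.log(`characters: TOON ${toon.length}, formatted JSON ${json.length}`);
```

The key names appear once in the header instead of once per record, which is where most of the saving comes from. For real cost estimates, measure with the tokenizer of the model you actually call.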

Reliable Output Generation and Function Calling: Improve the success rate of structured output tasks. By instructing the model to generate responses in TOON format, you leverage the explicit array length and field headers, which act as strong hints. This reduces the LLM’s tendency to omit fields or miscount items, making the generated data more likely to be valid and easier to check before you parse it back into JSON using the TOON SDK.
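The declared length and field list also give you something cheap to verify on the way back. The sketch below checks a single tabular block returned by a model: the row count must match the number in the header, and each row must have one cell per field. It is a hypothetical helper (`checkTabularBlock`), not a full TOON parser, and it assumes no quoted cells containing commas.

```typescript
// Illustrative only: checks a single tabular block, not a full TOON parser.
interface TabularCheck {
  ok: boolean;
  problems: string[];
}

function checkTabularBlock(text: string): TabularCheck {
  const lines = text.split("\n").filter((line) => line.trim() !== "");
  // Header shape: name[N]{field1,field2,...}:
  const header = /^(\w[\w.]*)\[(\d+)\]\{([^}]*)\}:$/.exec((lines[0] ?? "").trim());
  if (!header) return { ok: false, problems: ["missing or malformed header"] };

  const declaredLength = Number(header[2]);
  const fields = header[3].split(",");
  const rows = lines.slice(1);
  const problems: string[] = [];

  if (rows.length !== declaredLength) {
    problems.push(`header declares ${declaredLength} rows, found ${rows.length}`);
  }
  rows.forEach((row, index) => {
    // Assumption: no quoted cells containing commas, so a plain split is enough.
    const cells = row.trim().split(",");
    if (cells.length !== fields.length) {
      problems.push(`row ${index + 1} has ${cells.length} cells, expected ${fields.length}`);
    }
  });
  return { ok: problems.length === 0, problems };
}

// A response that was cut off after two of the three promised rows.
const truncated = "items[3]{sku,qty,price}:\n  A1,2,9.99\n  B2,1,14.5";
console.log(checkTabularBlock(truncated).problems);
// one problem reported: "header declares 3 rows, found 2"
```

A failed check is a natural trigger for a retry or a repair prompt, before any malformed data reaches the rest of the pipeline.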

Modernizing Existing JSON Pipelines: If your backend uses JSON for internal communication but feeds data to an LLM service, use the TOON TypeScript SDK or CLI to automatically encode the data just before API submission and decode the response upon receipt. This provides immediate, measurable cost savings without requiring you to rewrite your core data models or switch away from the JSON standard.

Unique Advantages: Benchmarked Efficiency and Accuracy

TOON is not just a compact format; it is designed for both LLM comprehension and token efficiency. In the benchmark results summarized below, it used fewer tokens than formatted JSON while achieving higher retrieval accuracy.

| Metric | TOON Performance | vs. Formatted JSON | Insight |
|---|---|---|---|
| Token Efficiency (Avg.) | 2,744 tokens | 39.6% Fewer Tokens | Significantly reduces API costs and increases the usable context window size. |
| Retrieval Accuracy (Avg.) | 73.9% | +4.2 Percentage Points | Explicit structure (length and fields) helps LLMs parse data more reliably, leading to better comprehension and fewer retrieval errors. |
| Efficiency Ranking | 26.9 (Accuracy per 1K Tokens) | Highest Ranking | TOON delivers the best balance of model accuracy and token cost across diverse data structures. |

In head-to-head benchmarks across models like Gemini, Claude, and GPT, TOON delivered the best balance of accuracy and token cost among the formats compared.
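The figures in the table can be cross-checked with simple arithmetic. The sketch below recomputes the accuracy-per-1K-tokens score from the reported accuracy and token count, and derives the formatted-JSON token count implied by the 39.6% reduction. The function names are hypothetical, and the implied baseline is only approximate because the published percentage is rounded.

```typescript
// Accuracy (in percent) divided by the token count expressed in thousands.
function accuracyPer1kTokens(accuracyPercent: number, tokens: number): number {
  return accuracyPercent / (tokens / 1000);
}

// If `tokens` is `reductionPercent` smaller than a baseline, recover that baseline.
function impliedBaselineTokens(tokens: number, reductionPercent: number): number {
  return tokens / (1 - reductionPercent / 100);
}

const score = accuracyPer1kTokens(73.9, 2744);
console.log(score.toFixed(1)); // 26.9

const jsonTokens = impliedBaselineTokens(2744, 39.6);
console.log(Math.round(jsonTokens)); // 4543
```

In other words, the efficiency ranking is not an independent measurement: it is the retrieval accuracy normalized by token cost, so a format can rank well either by being more accurate or by being cheaper.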

When to Use Other Formats

While TOON excels at structured data, it is important to understand its limitations to maximize efficiency:

- Deeply Nested or Highly Non-Uniform Data: If your data has many nested levels and few or no uniform arrays (e.g., complex configuration files), standard compact JSON may use fewer tokens.

- Pure Tabular Data: For flat tables with no nesting or structural metadata requirements, CSV remains the most token-efficient format, though TOON adds only a minimal 5–10% overhead to provide crucial structure and validation.

- Latency-Critical Local Models: In some latency-critical environments (especially local or quantized models), the simplicity of compact JSON might lead to a faster Time-To-First-Token (TTFT). Always benchmark your exact deployment if micro-latency is your absolute priority.
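The guidance above can be approximated with a small shape check. The sketch below is one hypothetical heuristic, not part of TOON or its SDK: it suggests CSV for a flat, uniform table, TOON when the data contains at least one uniform array of flat objects, and compact JSON otherwise. The names (`suggestFormat`, `isUniformTable`) and the thresholds are assumptions, and it treats objects whose keys appear in a different order as non-uniform.

```typescript
// Illustrative heuristic only; benchmark with your own data and tokenizer.
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };
type Suggestion = "csv" | "toon" | "compact-json";

function isObject(value: Json): value is { [key: string]: Json } {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// True for a non-empty array of objects that share the same keys (in the same
// order) and hold only primitive values.
function isUniformTable(value: Json): boolean {
  if (!Array.isArray(value) || value.length === 0) return false;
  const first = value[0];
  if (!isObject(first)) return false;
  const keys = Object.keys(first).join(",");
  return value.every(
    (item) =>
      isObject(item) &&
      Object.keys(item).join(",") === keys &&
      Object.values(item).every((cell) => cell === null || typeof cell !== "object"),
  );
}

// Searches nested arrays and objects for at least one uniform table.
function containsUniformTable(value: Json): boolean {
  if (isUniformTable(value)) return true;
  if (Array.isArray(value)) return value.some((item) => containsUniformTable(item));
  if (isObject(value)) return Object.values(value).some((item) => containsUniformTable(item));
  return false;
}

function suggestFormat(value: Json): Suggestion {
  // A bare flat table: CSV is smallest; pick TOON instead if you want the
  // explicit length and field header.
  if (isUniformTable(value)) return "csv";
  if (containsUniformTable(value)) return "toon";
  return "compact-json";
}

console.log(suggestFormat({ orders: [{ id: 1, total: 5 }, { id: 2, total: 7 }], page: 1 }));
// toon
```

Treat the result as a starting point: the latency consideration in the last bullet cannot be inferred from data shape and still needs to be measured on your deployment.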

Conclusion

TOON addresses two persistent problems with LLM data input: high token costs and inconsistent parsing. By translating your JSON into this compact, schema-aware format, you gain immediate, measurable benefits in both operational efficiency and data retrieval accuracy.